Issues with Accurate Timing and Latency in Audio Rendering

In an audio engine, for CPU performance reasons, audio samples are rendered in buffers, and each buffer is submitted individually to the output hardware, a digital-to-analog converter (DAC). Several factors make this the only feasible way to render audio in real time on a CPU, including CPU cache coherency and hardware API overhead.

These buffers typically contain hundreds or even thousands of samples at a time. It is important to understand that audio engine rendering commands are typically consumed at the beginning of an audio buffer render. A command, for example, might be to play a new sound, stop an old one, or change a parameter of a sound, such as volume or pitch.

Because of this, the size of the rendered audio buffer directly controls the rate at which new commands are consumed. This rate also describes the perceived audible latency of any issued commands. For example, if you were to trigger an explosion VFX and an explosion sound on the same game-thread tick, the latency between seeing the VFX explosion and hearing the sound is determined by this buffer size.

This means that a buffer containing 2048 samples, rendered at 48,000 samples per second (48 kHz), results in an audible latency of up to 43 milliseconds (ms).

In addition to this inherent latency with an audio renderer due to the render buffer size, audio commands issued from the game thread take time to get to the audio engine due to game-thread and audio-thread ticks, and general thread communication overhead. If a threading or gameplay latency is 13 ms (a feasible number), and we use the same buffer size of 2048 samples at 48 kHz, and if the command just missed the start of the current buffer being rendered, there will be a worst-case accumulated latency of 56 ms.
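The latency figures above can be reproduced with a few lines of arithmetic. The following is a minimal sketch; the function name `buffer_latency_ms` is hypothetical, and the 13 ms thread latency is just the example value used in the text.

```python
def buffer_latency_ms(buffer_size_samples, sample_rate_hz):
    """Worst-case wait, in ms, for a command that just missed a buffer start."""
    return 1000.0 * buffer_size_samples / sample_rate_hz


render_latency = buffer_latency_ms(2048, 48_000)  # about 42.7 ms, rounded to 43 in the text
thread_latency = 13.0                             # example threading/gameplay latency
worst_case = render_latency + thread_latency      # about 55.7 ms, rounded to 56 in the text

print(f"{render_latency:.1f} ms + {thread_latency:.1f} ms = {worst_case:.1f} ms")
```

Halving the buffer size halves the render-side part of this latency, which is why buffer size is the first setting to tune when timing feels loose.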

If an audio request comes in after a buffer has started to render (1), then the request will carry forward without playing (2) until the beginning of the next buffer (3).

To complicate matters, game-thread ticks are highly variable (at least from the perspective of an audio renderer), and are often susceptible to arbitrary hitches—during garbage collection, loading assets, and so on.

Game-thread ticks are also timed for gameplay and graphical rendering, not for audio rendering and audio timing. If you need to play a sound at a precise moment in time (exactly when a different sound is finishing or starting), calling that command from the game thread will not work because the game-thread and audio render-thread event timings are decoupled.

These issues of variable latency and game-thread-dependent timing of audio events are not significant for the majority of audio applications. Depending on the CPU load of a game or the constraints of a particular platform, it is usually possible to tweak the buffer sizes and number of buffers rendered ahead of time to a size where most timing issues are below the threshold of perception.

However, for some audio applications, such as interactive music, precisely timed repeating weapon fire, or other rhythmically-dependent audio, these issues are a significant problem.

Quartz and Sample Accuracy

Sample accuracy is the ability for a sound to start at an arbitrary sample in the audio renderer (such as the middle of a buffer) instead of on a buffer boundary.

Quartz is a system that works around the issues of variable latency and game-thread timing incompatibility by providing a way to play any sound with sample accuracy.

Quartz provides a way of scheduling an audio call mid-buffer instead of having to delay the audio render until the beginning of the next buffer.

Instead of rendering a sound at the beginning of an audio buffer, Quartz cues the sound to play on the desired musical value (bars or beats) or time value (seconds), independent of the buffer size, game-thread timing, or other sources of variable latency.

This has many applications—from creating dynamic music systems, to controlling the playback of rhythmic and timing-dependent sounds such as sub-machine gunfire.

How Quartz Works

With Quartz, you can give the audio engine advance warning by scheduling a sound with PlayQuantized(), which executes the play command at a specific sample in the audio render. By scheduling ahead of time, you account for the delay so that sounds can render on exactly computed samples to make the queued-up sounds play as though there were no delay.

The buffer size is also referred to as the audio buffer callback size, or callback size for short.

A represents the game thread, with X-axis demarcations indicating game frame ticks, while B represents the audio render thread, with X-axis demarcations indicating rendered audio buffers. Their respective X-axes line up vertically in real-world time.

(1) When the game thread calls PlayQuantized(), it requests that the sound be played on a given quantization boundary (such as the next quarter note), as calculated to the nearest audio output sample on the audio render thread. (2) Quartz issues this request in the form of a generic Quantized Command, and queues the sound for audio rendering at a future point in time. The request is held through multiple audio buffers on the audio render thread (3) by the computed amount. When it is time to render the sound in the computed buffer, Quartz feeds the audio through an integer delay that prevents it from rendering at the beginning of the buffer (4) and instead renders partway into the output buffer on the sample needed to play exactly on the quarter note (5).
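To make the buffer-and-offset idea concrete, here is a minimal sketch of the arithmetic involved. It is not Quartz's implementation: the helper names, the 120 BPM tempo, and the playhead position are all assumptions chosen for illustration.

```python
SAMPLE_RATE = 48_000   # samples per second
BUFFER_SIZE = 2048     # samples per rendered buffer (callback size)
BPM = 120              # assumed tempo, counted in quarter notes


def next_boundary(now_sample, interval_samples):
    """First multiple of interval_samples strictly after now_sample."""
    return (now_sample // interval_samples + 1) * interval_samples


def locate(target_sample, buffer_size):
    """Split an absolute sample index into (buffer index, offset inside that buffer)."""
    return divmod(target_sample, buffer_size)


samples_per_quarter = SAMPLE_RATE * 60 // BPM              # 24000 samples
now_sample = 30_000                                        # hypothetical playhead position
target = next_boundary(now_sample, samples_per_quarter)    # 48000
buffer_index, offset = locate(target, BUFFER_SIZE)         # (23, 896)
buffers_held = buffer_index - now_sample // BUFFER_SIZE    # 9 buffers of waiting

print(f"start in buffer {buffer_index} at offset {offset}, after holding {buffers_held} buffers")
```

The offset plays the role of the integer delay described above: the sound starts 896 samples into buffer 23 rather than at the start of that buffer.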

Quartz also works with Blueprints. Blueprints provide a way to apply these quantized commands and timing events, which in turn trigger gameplay logic in sync with the quantized actions occurring in the Audio Mixer.

Main Components in Quartz

Quartz Clock

A clock is the object in charge of scheduling and firing off events on the audio rendering thread. A clock is created with the Quartz Subsystem, and modified via a Blueprint using Clock Handles. Each clock has a Quartz Metronome.

Quartz Metronome

The metronome is the audio render thread object that tracks the passage of time and decides when upcoming commands need to be executed, based on user-provided information such as BPM (beats per minute) and a time signature.

Gameplay logic can subscribe to events on the metronome to be notified when musical durations occur.

Quartz Clock Handle

The Clock Handle is the gameplay-side proxy of an active clock. This is acquired via the Quartz subsystem, and used to control the clock running in the Audio Mixer.

Quartz Subsystem

While the Clock Handle provides access to functionality on a specific clock, the Quartz subsystem provides access to functionality that does not relate to a specific clock. This includes creating and getting clocks, seeing if a clock exists, and querying latency information.

AudioComponent: PlayQuantized()

This is a new function added to Audio Components that provides a way to play a sound on a specific clock, with a specified Quantization Boundary.

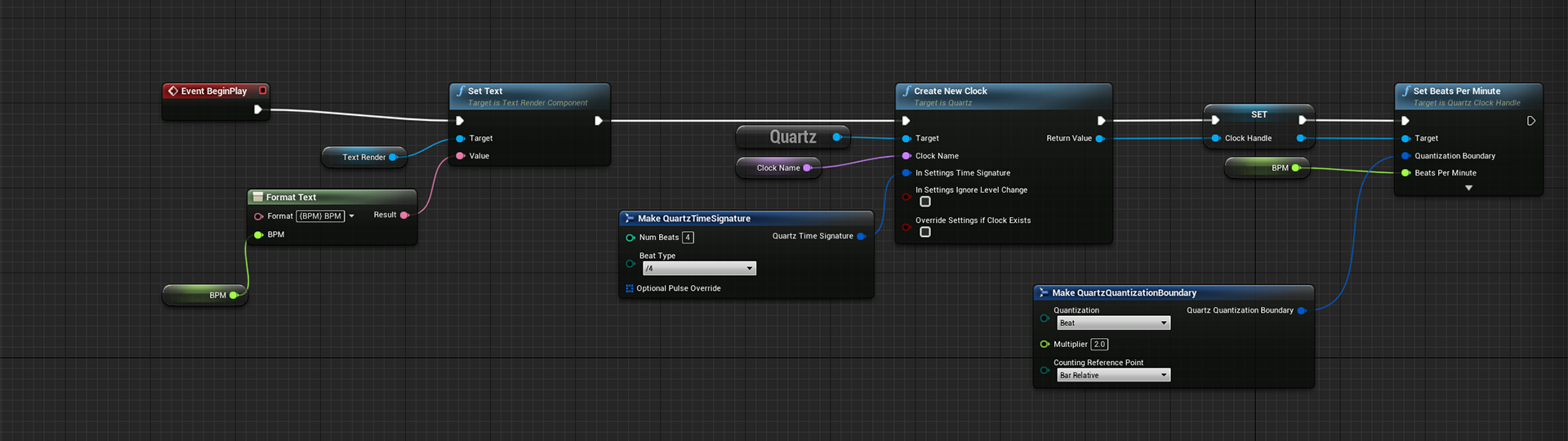

Create and Initialize Quartz Clocks

Below is a sample workflow:

1. To create a clock, call Create New Clock on the Quartz Subsystem.
2. Provide a Clock Name and a Time Signature.
3. Store the return value as a Blueprint variable. (If a clock by the same name already exists, this will return a handle to the existing clock.)
4. Set the Tick Rate for the clock by calling one of these functions on the Clock Handle:
   - Beats per Minute (shown below)
   - Milliseconds per Tick
   - Ticks per Second
   - Thirty-second Notes per Minute

The calls in Step 4 are technically interchangeable; the variations are for convenience. Note that each has its own corresponding getter.

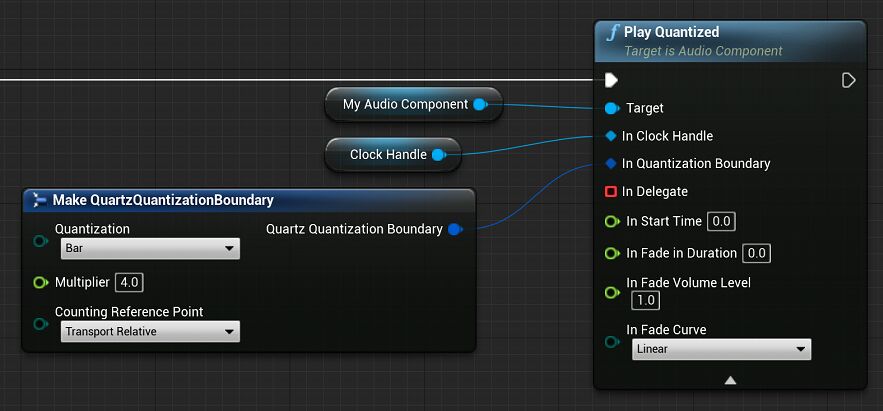
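The four tick-rate units describe the same quantity, so one can be derived from another. The sketch below assumes that one tick equals one thirty-second note (as the duration table in the next section states) and that Beats per Minute counts quarter notes, giving 8 ticks per beat. The function name `tick_rates_from_bpm` is hypothetical and is not part of the Quartz API.

```python
TICKS_PER_BEAT = 8  # assumption: beat = quarter note, tick = thirty-second note


def tick_rates_from_bpm(bpm):
    """Express one tempo in the four equivalent tick-rate units."""
    thirty_second_notes_per_minute = bpm * TICKS_PER_BEAT
    ticks_per_second = thirty_second_notes_per_minute / 60.0
    milliseconds_per_tick = 1000.0 / ticks_per_second
    return {
        "beats_per_minute": bpm,
        "thirty_second_notes_per_minute": thirty_second_notes_per_minute,
        "ticks_per_second": ticks_per_second,
        "milliseconds_per_tick": milliseconds_per_tick,
    }


rates = tick_rates_from_bpm(120)
print(rates)
```

Under these assumptions, 120 BPM corresponds to 960 thirty-second notes per minute, 16 ticks per second, and 62.5 ms per tick.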

Play Sounds on a Quantization Boundary

To play a sound on a Quantization Boundary, call Play Quantized on an Audio Component, and provide a Clock Handle and Quantization Boundary.

The Quartz Quantization Boundary is how you tell the Audio Mixer exactly when you want the sound to begin. This is used in a few other scheduling-related Quartz functions. There are several options in this struct.

Quantization is the time value to be used for scheduling. Possible durations include:

| Duration | Description |
|---|---|
| Bar | Automatically determined by time signature. |
| Beat | Defaults to the denominator of time signature, but can be overridden. |
| 1/32 | Thirty-second notes are the smallest duration available. |
| 1/16, (dotted) 1/16, 1/16 (triplet) | Sixteenth note, dotted sixteenth note, and sixteenth note triplet. |
| 1/8, (dotted) 1/8, 1/8 (triplet) | Eighth note, dotted eighth note, and eighth note triplet. |
| 1/4, (dotted) 1/4, 1/4 (triplet) | Quarter note, dotted quarter note, and quarter note triplet. |
| Half, (dotted) Half, Half (triplet) | Half note, dotted half note, and half note triplet. |
| Whole, (dotted) Whole, Whole (triplet) | Whole note, dotted whole note, and whole note triplet. Whole notes are the largest duration available. |
| Tick | On Tick is the smallest value, the same as a thirty-second note. |
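As a rough illustration of how these durations relate to one another, the sketch below converts a named duration into a sample count. It uses the standard musical definitions (a dotted note lasts 1.5 times its base value; a triplet note lasts two thirds of it) and assumes the tempo is counted in quarter notes. The names are hypothetical and this is not how Quartz stores durations internally.

```python
from fractions import Fraction

# Base durations as fractions of a whole note.
BASE = {
    "1/32": Fraction(1, 32), "1/16": Fraction(1, 16), "1/8": Fraction(1, 8),
    "1/4": Fraction(1, 4), "Half": Fraction(1, 2), "Whole": Fraction(1),
}


def duration_in_whole_notes(name, dotted=False, triplet=False):
    """Length of a duration as a fraction of a whole note."""
    length = BASE[name]
    if dotted:
        length *= Fraction(3, 2)   # dotted: base value plus half again
    if triplet:
        length *= Fraction(2, 3)   # triplet: three notes in the space of two
    return length


def duration_in_samples(length, quarter_bpm, sample_rate_hz):
    """Convert a whole-note fraction to samples; tempo assumed to count quarter notes."""
    seconds = length * 4 * Fraction(60) / Fraction(quarter_bpm)
    return seconds * sample_rate_hz


quarter = duration_in_samples(duration_in_whole_notes("1/4"), 120, 48_000)
dotted = duration_in_samples(duration_in_whole_notes("1/4", dotted=True), 120, 48_000)
triplet = duration_in_samples(duration_in_whole_notes("1/4", triplet=True), 120, 48_000)
print(quarter, dotted, triplet)
```

At 120 BPM and 48 kHz this gives 24000, 36000, and 16000 samples for the quarter note, dotted quarter note, and quarter note triplet respectively.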

Multiplier is the number of Quantization time values until the sound should play. This is a multiplier of the Quantization time value selected.

Counting Reference Point is the reference point used for scheduling the event. Different use cases will want to use different reference points.

- Bar Relative: From the start of the current bar. For example, with a drum machine:
  - Setting Quantization to Beat and Multiplier to 2.0 will schedule the event for the next Beat 2 (second beat in the measure) that occurs.
  - If the clock is set on Beat 1 of 4 beats to the bar, then the event will trigger on the next beat.
  - If the clock is set on Beat 3 of 4, then the event will trigger on Beat 2 of the next bar.
- Transport Relative: From the time the clock was started, or the transport was last reset. For example, when triggering a musical stem on a 4-bar boundary:
  - This is used to trigger clips/stems relative to the Transport (song counter).
  - If the clock is on Bar 6, then the event will trigger on Bar 9 (Transport counting starts from Bar 1).
- Current Time Relative: From the current time. For example, for triggering stingers as soon as makes musical sense:
  - Setting Quantization to Beat and Multiplier to 2.0 will schedule the event two beats after the current beat.
  - If the clock is on Beat 1 of 4, then the event will trigger on Beat 3.
  - If the clock is on Beat 3 of 4, then the event will trigger on Beat 1 of the next bar.
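The three reference points can be compared with a small model that works on whole bars and beats, numbered from 1. This is only a sketch that reproduces the examples above. The function names are hypothetical, and it assumes that an event always lands strictly after the current position, which the examples suggest but do not state outright.

```python
def bar_relative(current_bar, current_beat, target_beat):
    """Next occurrence of target_beat, counted from the start of a bar."""
    if target_beat > current_beat:
        return (current_bar, target_beat)
    return (current_bar + 1, target_beat)


def transport_relative(current_bar, bars_per_boundary):
    """Next bar that starts a group of bars_per_boundary bars, counting from Bar 1."""
    groups_elapsed = (current_bar - 1) // bars_per_boundary
    return (groups_elapsed + 1) * bars_per_boundary + 1


def current_time_relative(current_bar, current_beat, beats_ahead, beats_per_bar=4):
    """Position reached by counting beats_ahead beats from the current beat."""
    zero_based = (current_beat - 1) + beats_ahead
    return (current_bar + zero_based // beats_per_bar, zero_based % beats_per_bar + 1)


print(bar_relative(1, 1, 2))           # (1, 2): Beat 2 of the current bar
print(bar_relative(1, 3, 2))           # (2, 2): Beat 2 of the next bar
print(transport_relative(6, 4))        # 9: the next 4-bar boundary after Bar 6
print(current_time_relative(1, 1, 2))  # (1, 3): Beat 3 of the current bar
print(current_time_relative(1, 3, 2))  # (2, 1): Beat 1 of the next bar
```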

With Quartz, a bar is not always a whole note, and a beat is not always a quarter note. See Time Signatures and Pulse Overrides below for more information.

Subscribe to Command Events

When you want to synchronize gameplay logic with a quantized event (such as the onset of a sound you have scheduled to play), you can get notified at different stages of that particular command's life cycle.

To do this, drag a pin off the In Delegate argument for these kinds of functions and create a custom event.

The custom event may be called multiple times for different reasons. In the pictured example, you can switch on the Event Type enum (EQuantizationCommandDelegateSubType) to respond to the following events:

| Event Type | Description |
|---|---|
| Failed To Queue | The command did not make it to the audio render thread (Play Quantized likely failed concurrency). |
| Queued | The command has been received by the audio render thread, and is awaiting execution (concurrency passed). |
| Canceled | The command was canceled on the audio render thread before it was executed (Stop was likely called on the Audio Component before it started playing). |
| About to Start | The command is about to be executed (this is currently called on the same gameplay frame as Started, but in the future this should be called early enough to compensate for inter-thread latency). |
| Started | The command has been executed on the audio render thread. |

Subscribe to Metronome Events

In addition to subscribing to various stages of a Quantized Command life cycle to synchronize gameplay with audio rendering, you can also get notified each time a Quantization Duration occurs.

In the example below, we subscribe to a bar notification. Each time our event fires, we schedule a sound to play on the following bar:

If you want access to all Quantization Durations in a single event, you can subscribe to all the Quantization Events at once and switch on your Quantization Type Enum:

Time Signatures and Pulse Overrides

Quartz allows for finer control over time signature and meter. As seen in Create and Initialize Quartz Clocks above, when creating a clock, you will need to provide a Time Signature.

A Time Signature has three fields. The first two represent an ordinary time signature:

- Num Beats: The number of beats (of the type specified under Beat Type) per measure, or the numerator of the time signature.
- Beat Type: Enum representing the duration that gets the beat, or the denominator of the time signature. Possible values are:

  | Enum | Value |
  |---|---|
  | /2 | Half note |
  | /4 | Quarter note |
  | /8 | Eighth note |
  | /16 | Sixteenth note |
  | /32 | Thirty-second note |

- Optional Pulse Override, which is the third field, provides a way to specify which durations get the beat throughout the bar. If no Optional Pulse Override is provided, it defaults to Beat Type from the Time Signature.

The Bar Quantization Boundary is intuitively governed by the Time Signature. In 4/4 time, a bar lasts 4 quarter notes, while in 12/8 time, a bar would last 12 eighth notes.
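The relationship between time signature and bar length can be sketched as follows. The function name is hypothetical, and the conversion to seconds assumes the tempo is counted in quarter notes.

```python
from fractions import Fraction


def bar_seconds(num_beats, beat_type, quarter_bpm):
    """Length of one bar in seconds for a num_beats/beat_type time signature."""
    quarters_per_bar = Fraction(num_beats, beat_type) * 4
    return float(quarters_per_bar * 60 / quarter_bpm)


print(bar_seconds(4, 4, 120))   # 4 quarter notes per bar
print(bar_seconds(12, 8, 120))  # 12 eighth notes per bar, equal to 6 quarter notes
print(bar_seconds(7, 8, 120))   # 7 eighth notes per bar, equal to 3.5 quarter notes
```

At 120 BPM these bars last 2.0, 3.0, and 1.75 seconds, so the same Bar boundary can mean quite different amounts of time depending on the time signature.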

The Pulse Override argument is actually an array of pulse overrides. Each entry lets you specify the Number of Pulses desired at the specified Duration before moving on to the next entry in the array. See the example below:

The Time Signature is 7/8. Without providing an Optional Pulse Override, the Beat Quantization Boundary would be the same as the /8 (eighth note) Quantization Boundary.

But with Quartz, you can specify exactly how long your beats (or pulses) are. In this example, there are two entries in the array, shown under Default Value on the Details panel:

For the 0 value:

- Number Of Pulses: 2
- Pulse Duration: 1/4

This means the first 2 beats will each be a quarter note in duration.

For the 1 value:

- Number Of Pulses: 1
- Pulse Duration: (dotted) 1/4

This means the last beat will be a dotted quarter note in duration.

This enforces a count of (**1**, 2) (**1**, 2) (**1**, 2, 3), where each bold 1 is the eighth note that gets the beat. If your Blueprint subscribes to the Beat Quantization Boundary, this is when that event will be fired.

If you switch the entries around, the count becomes (**1**, 2, 3) (**1**, 2) (**1**, 2).

If the values provided represent a duration shorter than a bar, the last entry is duplicated to reach the correct length.

If the values provided represent a duration longer than a bar, the list will be truncated, and counting will restart from the beginning of the list on the downbeat of the next bar.
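The pulse override rules above can be modeled as a function that expands the array into the list of pulses for one bar. This is a sketch of the described behavior, not Quartz's implementation. The function names are hypothetical, and clipping a pulse that would overrun the bar is an assumption, since the text only says the list is truncated.

```python
from fractions import Fraction


def expand_pulses(num_beats, beat_type, overrides=()):
    """Return the pulse lengths (as fractions of a whole note) that fill one bar.

    overrides is a list of (number_of_pulses, pulse_duration) pairs.
    """
    bar = Fraction(num_beats, beat_type)
    # Flatten [(2, 1/4), (1, 3/8)] into [1/4, 1/4, 3/8].
    flat = [Fraction(dur) for count, dur in overrides for _ in range(count)]
    if not flat:
        # No override: every pulse defaults to the Beat Type.
        flat = [Fraction(1, beat_type)]
    if any(dur <= 0 for dur in flat):
        raise ValueError("pulse durations must be positive")
    pulses, used, i = [], Fraction(0), 0
    while used < bar:
        # Past the end of the list, keep repeating the last entry.
        dur = flat[min(i, len(flat) - 1)]
        # Assumption: a pulse that would overrun the bar is clipped to fit.
        dur = min(dur, bar - used)
        pulses.append(dur)
        used += dur
        i += 1
    return pulses


def as_eighth_counts(pulses):
    """Express each pulse as a number of eighth notes."""
    return [p / Fraction(1, 8) for p in pulses]


quarter, dotted_quarter = Fraction(1, 4), Fraction(3, 8)
example = expand_pulses(7, 8, [(2, quarter), (1, dotted_quarter)])
swapped = expand_pulses(7, 8, [(1, dotted_quarter), (2, quarter)])
print(as_eighth_counts(example))  # pulses of 2, 2, and 3 eighth notes
print(as_eighth_counts(swapped))  # pulses of 3, 2, and 2 eighth notes
```

Calling `expand_pulses(4, 4, [(1, quarter)])` repeats the single entry to produce four quarter-note pulses, and `expand_pulses(4, 4, [(5, quarter)])` stops after four, matching the duplication and truncation rules.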