Goals

In this Quick Start guide, you will go through the steps to calibrate lens distortion and the nodal point offset using the Camera Calibration plugin in a production environment.

Objectives

-

Connect your camera to Unreal Engine (UE) so it provides a live video feed.

-

Calibrate lens distortion using the Camera Calibration plugin.

-

Calibrate the nodal point offset of the tracking system to the lens using the Camera Calibration plugin.

1 - Required Setup

For this guide, you will use a production camera, an optical camera tracking system, and an AJA Kona 5 for source video input.

A master clock is optional to supply a timecode and sync to the AJA Kona 5. In a production environment, it is recommended that the production camera and the camera tracking system be supplied with the sync and timecode as well. You will need them if you want to proceed with a CG composite over live video to make sure the layers are synchronized. Optionally, you can stream the focus, aperture, and focal length to UE using the Preston Live Link Plugin or the Live Link Master Lockit Plugin.

-

Create a new Unreal Engine project. Select the Film, Television, and Live Events category and click Next.

![Select the Film, Television, Live Events category]()

-

Select the Virtual Production template and click Next.

![Select the Virtual Production template]()

-

Enter the file location and project name, and click Create Project.

![Click Create Project]()

-

Once the editor is loaded, click Settings > Plugins to open the Plugins Menu.

![Open the Plugins Menu]()

-

Select the Virtual Production category and enable the Camera Calibration and LiveLink plugins. Select Yes on the pop-up box and click Restart Now to restart the editor.

![Enable the Camera Calibration plugin]()

![Enable the LiveLink plugin]()

![Click Restart Now]()

Section Results

You enabled the Camera Calibration and LiveLink plugins and restarted the editor. You are now ready to continue with the calibration process.

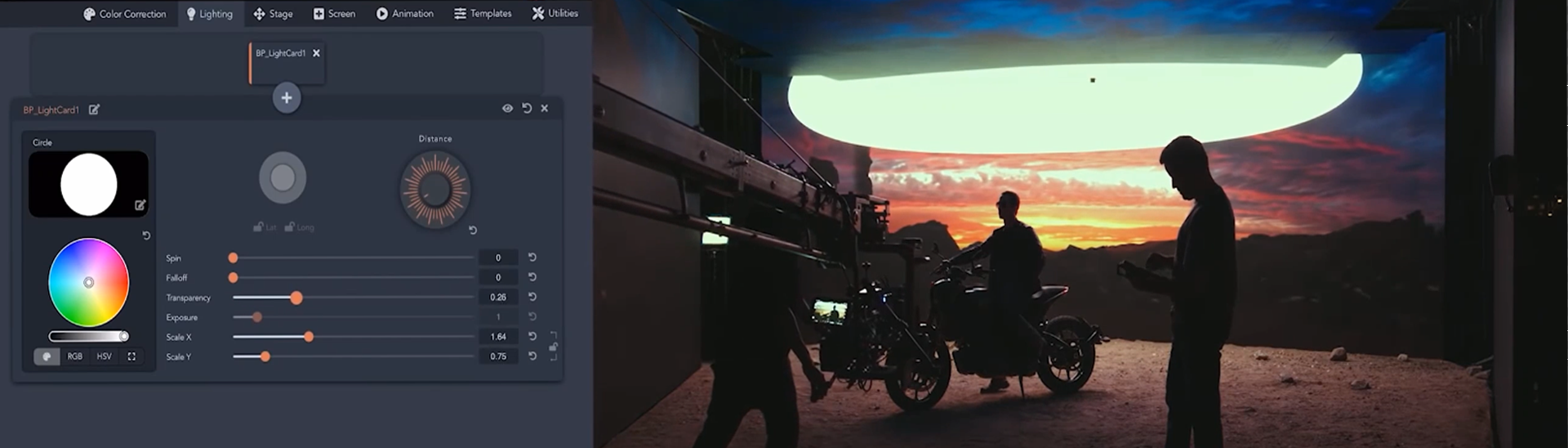

2 - Creating your Media Profile

You can use a Media Profile to set up video source input and output, as well as the timecode and sync format. In this section, you will create a new Media Profile to set these parameters.

You only need the video source from the production camera to calibrate lens distortion and the nodal offset. The timecode and sync are not required, but it is important to know how to set them up in a Media Profile for synchronizing a CG composite to a video source.

-

Click Media Profile > New Empty Media Profile to open the Media Profile window.

-

Select MediaProfile and click Select .

-

Choose a destination folder and enter a name for your profile.

-

Click Save .

![Create a new empty Media Profile]()

![Select Media Profile]()

![Enter a name and click Save]()

-

Expand the Media Sources category under Inputs and click on the MediaSource-01 dropdown. Select the Aja Media Source option.

![Select the Aja Media Source option]()

-

Expand the AJA category and click the Configuration dropdown. Select your Device, Source, Resolution, Standard, and Frame Rate. Click Apply.

![Choose your configuration]()

-

Click the Timecode Format dropdown and select your format. LTC is the timecode embedded in the video source.

![Choose your Timecode format]()

-

Enable the Is sRGB Input checkbox to convert YUV to RGB.

![Enable sRGB Input]()

Follow the Timecode Setup and Genlock Setup sections if you are connecting the AJA Kona 5 to a master clock and receiving Timecode and Genlock.

Timecode Setup

-

Scroll down to the Timecode Provider section.

-

Enable the Override Project Settings checkbox.

-

Click the Timecode Provider dropdown and select AJA SDI Input from the list.

-

Enable the Use Dedicated Pin checkbox (this is the Dedicated TC cable on the breakout cable attached to the AJA Kona 5).

![Enter your Timecode Provider settings]()

-

Click the LTC Configuration dropdown to expand it and select your Device, LTC Index, and Frame Rate. Click Apply.

Genlock Setup

-

Scroll down to the Genlock section.

-

Enable the Override Project Settings checkbox.

-

Click the Custom Time Step dropdown and select AJA SDI Input from the list.

-

Enable the Use Reference In checkbox.

![Enter your Genlock settings]()

-

Click the Configuration dropdown and select your Device, Source, Resolution, Standard, and Frame Rate. Click Apply.

-

Click Apply and Save on the toolbar to save your changes.

![Apply and Save]()

Section Results

In this section you created a new Media Profile, and configured the video source input. You also added the optional Timecode and Genlock for syncing if a master clock is being used at this stage.

3 - Creating a Lens File

The Lens File provides the calculation and storage of distortion and nodal offset data, specific to each lens-to-camera combination. We recommend you create a new Lens File if the lens, tracking object location, or the camera body changes.

-

Right-click in the Content Browser and click Miscellaneous > Lens File to create a new Lens File.

![Create a new Lens File]()

-

Double-click the Lens File in the Content Browser to open it.

![Open the Lens File]()

-

Under the Viewport Settings section, change the Transparency to 0.0 . This controls the transparency of the CG elements over the video source image.

-

A Transparency of 0 hides all CG elements.

-

A Transparency of 1 shows the CG elements only.

![Set Transparency to 0.0]()

-

Click the Camera dropdown and select the connected camera from the list. Click the Media Source dropdown and select the Media Profile you created earlier.

![Select the Media Profile]()

-

Under the Lens Info section, enter the Lens Model Name. A recommended naming convention combines the camera body name and the focal length.

-

Add the Lens Serial Number if available. Add the Lens Model (only spherical lens models are supported at this time). Enter the Sensor Dimensions to match your camera.

![Enter the Sensor Dimensions]()

-

Click Save Lens Information .

A new Lens File needs to be created for each lens-to-camera body combination.
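The Sensor Dimensions matter because, together with the focal length, they determine the field of view used to line up CG with the video. As a rough illustration, the sketch below applies the standard pinhole relation between sensor size, focal length, and angle of view. The sensor size and focal length are hypothetical placeholders rather than values from this guide, and the function name is made up for the example.

```python
import math


def field_of_view_deg(sensor_size_mm: float, focal_length_mm: float) -> float:
    """Angle of view along one sensor axis for an ideal pinhole camera."""
    if sensor_size_mm <= 0 or focal_length_mm <= 0:
        raise ValueError("sensor size and focal length must be positive")
    return math.degrees(2.0 * math.atan(sensor_size_mm / (2.0 * focal_length_mm)))


# Hypothetical values for illustration only; use your camera's documented
# sensor size and the focal length of the lens you are calibrating.
SENSOR_WIDTH_MM = 36.0
SENSOR_HEIGHT_MM = 24.0
FOCAL_LENGTH_MM = 35.0

horizontal_fov = field_of_view_deg(SENSOR_WIDTH_MM, FOCAL_LENGTH_MM)
vertical_fov = field_of_view_deg(SENSOR_HEIGHT_MM, FOCAL_LENGTH_MM)
print(f"horizontal: {horizontal_fov:.2f} deg, vertical: {vertical_fov:.2f} deg")
```

If the sensor dimensions entered in the Lens File are too large or too small, the computed field of view shifts accordingly, which is one reason a CG overlay can appear scaled relative to the video.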

Properties Summary

At the bottom of the Lens File window you will find a summary of the current properties applied to the Lens File. Most of the properties show as blank or N/A at this stage. These properties will update as you continue with the calibration process.

Section Results

In this section you created a new Lens File and added the lens and camera information.

4 - Creating your Virtual Live Link Subject

A Live Link Virtual Subject lets you manually input the focus, aperture, and focal length values when no device is available to stream them. If you are using a Preston MDR Unit (Section 7) or an Ambient Master Lockit Plus device (Section 8) to stream lens data to UE, proceed to the relevant section to set these up.

In this section, you will create a new Live Link Virtual Subject to manually input the values for focus, iris, and zoom.

-

Right-click in the Content Browser and select Live Link > Blueprint Virtual Subject .

-

Select the Live Link Camera Role from the dropdown and click OK.

-

Double-click the virtual subject asset in the Content Browser to open it.

![Right-click and select Live Link and Blueprint Virtual Subject]()

![Select the Live Link Camera Role]()

-

Click + Variable to add a new variable. Name the variable Focus . Go to the Details panel and set the Variable Type to Float . Enable the Instance Editable checkbox.

![Add a new variable]()

![Name it Focus and set it as Float]()

-

Repeat the above step twice to create two additional Float variables named Zoom and Iris .

![Create Zoom and Iris]()

-

Right-click in the Event Graph then search for and select Update Virtual Subject Static Data .

-

Connect the Update Virtual Subject Static Data node to the Event On Initialize node.

-

Right-click the Static Data pin in the Update Virtual Subject Static Data node and select Split Struct Pin .

![Add Update Virtual Subject Static Data node]()

![Connect the Update Virtual Subject Static Data node to the Event On Initialize node]()

![Right-click the Static Data pin and click Split Struct]()

-

Enable the Focal Length , Aperture and Focus Distance checkboxes, as seen below.

![Enable Focal Length, Aperture, and Focus Distance]()

-

Right-click in the Event Graph then search for and select Update Virtual Subject Frame Data .

-

Connect the Update Virtual Subject Frame Data node to the Event On Update node.

-

Right-click the Frame Data input pin in the Update Virtual Subject Frame Data node and select Split Struct Pin. The Event On Update node is triggered on every tick, so this node updates the focus, iris, and zoom (FIZ) data available for each frame.

![Add Update Virtual Subject Frame Data node]()

![Connect the Update Virtual Subject Frame Data node to the Event On Update node]()

![Right click the Frame Data pin and click Split Struct]()

-

Connect the Zoom variable to the Focal Length input pin.

-

Connect the Iris variable to the Aperture input pin.

-

Connect the Focus variable to the Focus Distance input pin.

-

Compile and Save the Blueprint.

![Add Update Virtual Subject Frame Data node]()

Section Results

In this section you created a new Live Link Virtual Subject and configured it so it can be used to manually input Focus, Iris, and Zoom to your CineCamera Actor.
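To make the data flow of the Blueprint easier to follow, here is a plain-Python model of it. It is not Unreal Engine API: the class and method names are hypothetical stand-ins for the Blueprint nodes, and the numeric values and their units are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class CameraStaticData:
    """Mirrors the three checkboxes enabled on the split Static Data pin."""
    focal_length_supported: bool = False
    aperture_supported: bool = False
    focus_distance_supported: bool = False


@dataclass
class CameraFrameData:
    """Mirrors the three inputs wired on the split Frame Data pin."""
    focal_length: float = 0.0
    aperture: float = 0.0
    focus_distance: float = 0.0


class ManualFizSubject:
    """Stand-in for the Blueprint Virtual Subject with its three variables."""

    def __init__(self, focus: float = 0.0, iris: float = 0.0, zoom: float = 0.0):
        self.focus = focus
        self.iris = iris
        self.zoom = zoom
        self.static_data = None

    def on_initialize(self) -> None:
        # Runs once: declare which lens values this subject provides.
        self.static_data = CameraStaticData(
            focal_length_supported=True,
            aperture_supported=True,
            focus_distance_supported=True,
        )

    def on_update(self) -> CameraFrameData:
        # Runs every tick: Zoom -> Focal Length, Iris -> Aperture,
        # Focus -> Focus Distance, matching the wiring in the steps above.
        return CameraFrameData(
            focal_length=self.zoom,
            aperture=self.iris,
            focus_distance=self.focus,
        )


# Hypothetical manual values typed in by an operator.
subject = ManualFizSubject(focus=250.0, iris=2.8, zoom=35.0)
subject.on_initialize()
frame = subject.on_update()
print(frame)
```

The split between a one-time static declaration and a per-tick frame update is the point of the sketch: changing a variable between ticks changes the next frame's data without touching the static data.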

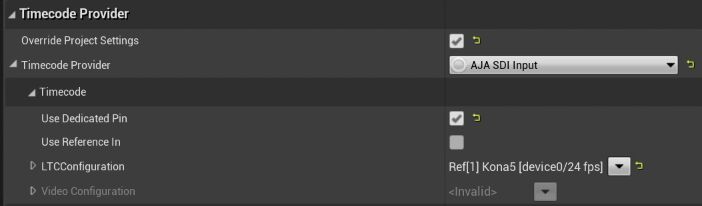

5 - Connecting your Sources to Live Link

In this section you will use Live Link to connect a Virtual Subject and an OptiTrack source used to track the camera.

-

Go to Window > Live Link to open the Live Link window.

![Open the Live Link window]()

-

To add the camera tracking, click + Source > OptiTrack Source and click Create .

![Create a new OptiTrack Source]()

![The OptiTrack appears on the list]()

-

To add the Live Link Virtual Subject, click + Source > Add Virtual Subject . Select the Virtual Subject created in the previous section and click Add .

![Add a Virtual Subject]()

![Select your Virtual Subject from the list]()

-

To manually input Focus, Iris, and Zoom values, select the Virtual Camera in the Live Link window and input the values under the Default section.

![Enter the Focus, Iris, and Zoom values]()

-

Select your CineCamera Actor in the World Outliner and go to the Details panel. Click + Add Component and search for then select Live Link Component Controller . Rename the component to LiveLinkComponentControllerFiz.

![Add a Live Link Controller component]()

-

Inside the Details panel, scroll down to the Live Link section and click the Subject Representation dropdown. Select the Virtual Camera from the list.

![Add a Live Link Controller component]()

-

Repeat the previous step to add another Live Link Controller component, and rename it LiveLinkComponentControllerTracking .

-

In the Details panel, click the Subject Representation dropdown. Select the physical camera from the list. In this example, it is the Alexa_LF_A being streamed over Live Link from Motive.

![Add your camera]()

Section Results

In this section, you connected the Live Link Virtual Subject and the OptiTrack Live Link source to the CineCamera Actor.

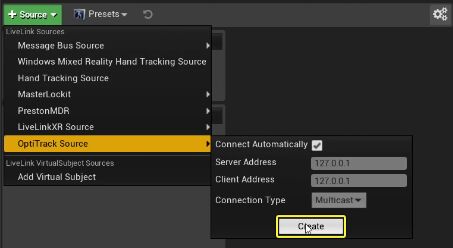

6 - Evaluating Sync and Timecode with a Timed Data Monitor (Optional)

This is an optional section for users that will use timecode and sync with the Kona 5 and want to evaluate the source inputs. You can also adjust the timing offsets and buffers to make sure the CG elements are in sync with the video source and tracking data.

-

Click Settings > Plugins to open the Plugins Menu .

![Open the Plugins Menu]()

-

Select the Virtual Production category and enable the Timed Data Monitor plugin. Select Yes on the popup box and click Restart Now to restart the editor.

![Enable the Timed Data Monitor plugin]()

-

Once the editor has reloaded, click Window > Developer Tools > Timed Data Monitor to open the Timed Data Monitor window. The Timed Data Monitor is used to monitor the sync and timecode of different devices. It can also synchronize devices by evaluating their timecode properties and applying buffers and offsets to the incoming data.

-

Change the Eval Mode to Timecode and enter your Global TC Offset value. In this example, a Global TC Offset of 3 frames was entered to synchronize the tracking system with the video input from the AJA, and the buffer size of the tracking system was increased to 50.

![Change the Eval Mode and enter the Global TC Offset]()

The top of the window shows the incoming sync and timecode connected to the AJA that were set up in the Media Profile.
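To get a feel for what a frame offset means in time, the sketch below converts the 3-frame example offset into milliseconds and shifts a timecode by that many frames. The 24 fps frame rate, the sample timecode, and the helper names are assumptions for illustration, the arithmetic is non-drop-frame only, and the direction in which the editor applies the offset is not modeled here.

```python
def timecode_to_frames(timecode: str, fps: int) -> int:
    """Convert non-drop-frame HH:MM:SS:FF timecode to an absolute frame count."""
    hours, minutes, seconds, frames = (int(part) for part in timecode.split(":"))
    if frames >= fps:
        raise ValueError("frame field must be smaller than the frame rate")
    return ((hours * 60 + minutes) * 60 + seconds) * fps + frames


def frames_to_timecode(total_frames: int, fps: int) -> str:
    """Convert an absolute frame count back to HH:MM:SS:FF."""
    if total_frames < 0:
        raise ValueError("frame count must not be negative")
    seconds, frames = divmod(total_frames, fps)
    minutes, seconds = divmod(seconds, 60)
    hours, minutes = divmod(minutes, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}:{frames:02d}"


def apply_frame_offset(timecode: str, offset_frames: int, fps: int) -> str:
    """Shift a timecode by a whole number of frames."""
    return frames_to_timecode(timecode_to_frames(timecode, fps) + offset_frames, fps)


FPS = 24                      # assumed project frame rate
GLOBAL_TC_OFFSET_FRAMES = 3   # the offset used in the example above

offset_ms = GLOBAL_TC_OFFSET_FRAMES / FPS * 1000.0
shifted = apply_frame_offset("01:00:00:22", GLOBAL_TC_OFFSET_FRAMES, FPS)
print(f"{GLOBAL_TC_OFFSET_FRAMES} frames at {FPS} fps = {offset_ms} ms")
print(f"01:00:00:22 shifted by {GLOBAL_TC_OFFSET_FRAMES} frames -> {shifted}")
```

At higher frame rates the same frame offset covers less time, so an offset tuned at one frame rate generally needs to be revisited if the project frame rate changes.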

Section Results

In this section you learned how to use the Timed Data Monitor plugin to set the timecode and Global TC Offset of your Kona 5.

7 - Connecting your Preston System (Optional)

If you are using a Preston system with your production camera, follow the steps in the Connecting your Preston System guide.

8 - Connecting Smart Lens Using Master Lockit Plus (Optional)

If you are connecting a Smart lens using an Ambient Master Lockit Plus device, follow the Connecting your Master Lockit System guide.

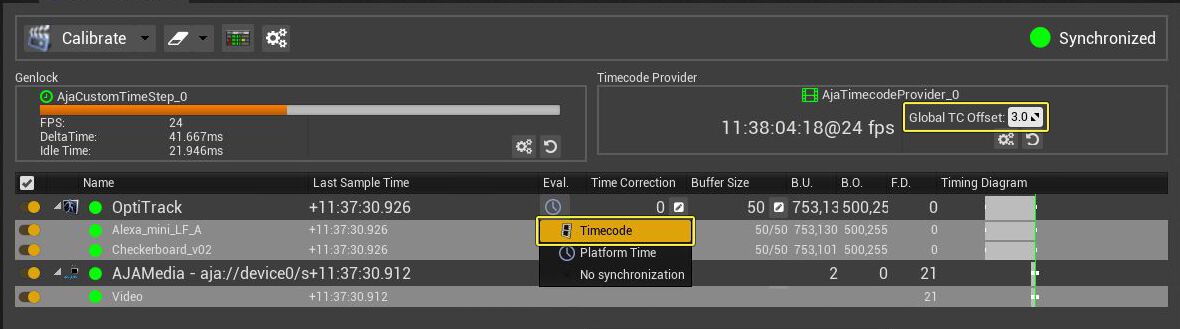

9 - Calculating Lens Distortion

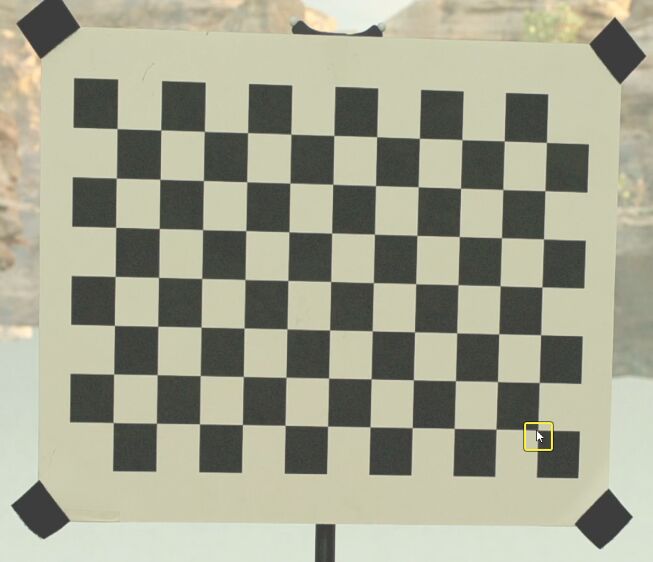

To calculate lens distortion, you will need a physical checkerboard printed and mounted on a rigid surface to hold in view of the production camera. You can also use a checkerboard image displayed on a tablet. A CG checkerboard will be created to match the properties of the physical checkerboard.
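To illustrate why the CG checkerboard must match the physical one, the sketch below builds the grid of corner positions that a checkerboard description implies. It uses the example values given later in this section (7 corner rows, 11 corner columns, 4.5 cm squares). The function name and the choice of a flat board on the z = 0 plane are assumptions for illustration, not the plugin's internal representation.

```python
def checkerboard_corners(corner_rows: int, corner_cols: int, square_side_cm: float):
    """Return (x, y, z) corner positions in cm, row by row, on the z = 0 plane."""
    if corner_rows < 1 or corner_cols < 1 or square_side_cm <= 0:
        raise ValueError("rows and columns must be >= 1 and side length positive")
    return [
        (col * square_side_cm, row * square_side_cm, 0.0)
        for row in range(corner_rows)
        for col in range(corner_cols)
    ]


# Example values from this section's physical checkerboard.
CORNER_ROWS = 7
CORNER_COLS = 11
SQUARE_SIDE_CM = 4.5

corners = checkerboard_corners(CORNER_ROWS, CORNER_COLS, SQUARE_SIDE_CM)
print(f"{len(corners)} corners, last corner at {corners[-1]} cm")
```

A wrong row or column count changes how many corners are expected, and a wrong side length scales every corner position, so either mistake makes the detected corners disagree with the expected grid.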

-

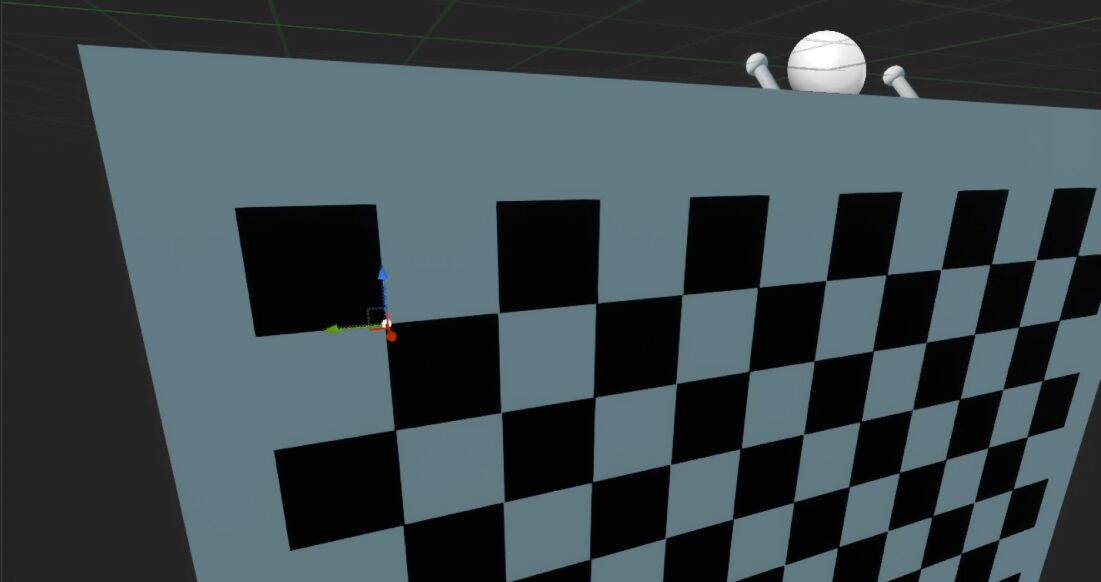

On the Place Actors tab, click the Virtual Production category and drag the Checkerboard Actor into your level.

![Add a Checkerboard actor to your level]()

-

Select the Checkerboard Actor in the World Outliner window.

-

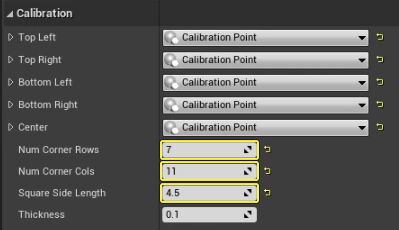

Go to the Details panel and scroll down to the Calibration section.

-

Enter the Number of Corner Rows .

-

Enter the Number of Corner Columns .

-

Enter the Square Side Length in cm.

-

These values need to match the physical Checkerboard being used.

![Add the Rows, Columns, and Square Side Length]()

-

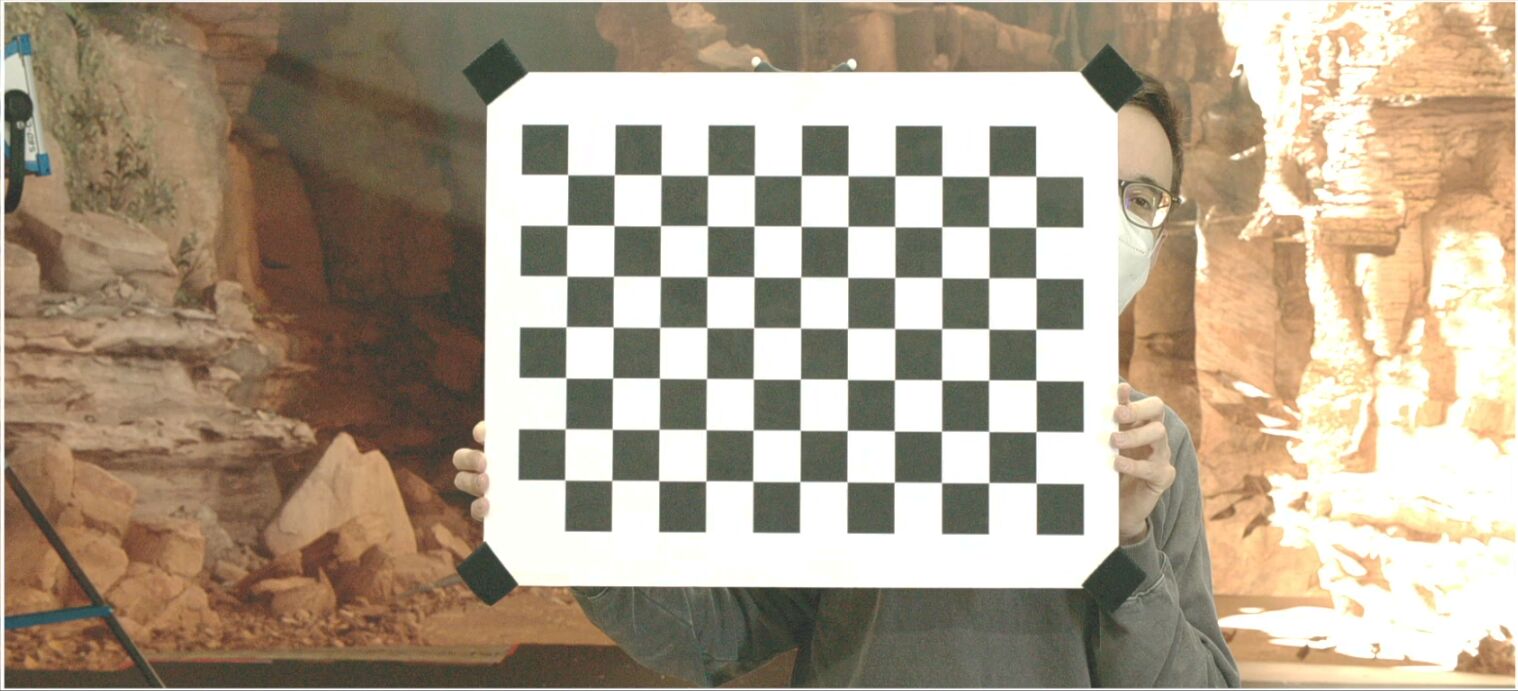

The example above uses a physical checkerboard containing 7 corner rows and 11 corner columns. Each square side is 4.5 cm in length. This image shows the checkerboard used in the example.

![Example checkerboard used]()

-

Initially there aren't any materials assigned to the checkerboard. Click the Odd Cube Material and Even Cube Material dropdowns and select the desired Materials. If your physical checkerboard starts with a black square in the top left, then that will be the Odd Cube Material color.

![Add the Even and Odd Materials]()

-

Select your CineCamera Actor in the World Outliner window.

-

Select the Live Link Controller Component previously created to receive FIZ data.

-

Go to the Details panel, scroll down to the Camera Role dropdown, and open it.

-

In the Camera Calibration section, click the Lens File dropdown and select the Lens File you created earlier.

![Enter the Sensor Dimensions]()

-

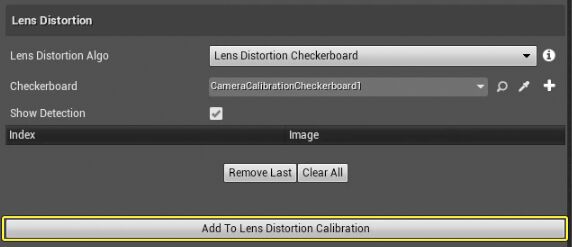

Double-click the Lens File in the Content Browser to open it and click the Lens Distortion tab.

-

Place the checkerboard in front of the camera as shown below.

![Place a checkerboard in front of the camera]()

-

Go to the Lens Distortion section.

-

Click the Lens Distortion Algo dropdown and select Lens Distortion Checkerboard .

-

Click the Checkerboard dropdown and select the Checkerboard Actor created earlier.

-

Enable the Show Detection checkbox. This displays a detection preview each time you capture an image for the lens distortion calculation.

![Select the distortion algorithm and checkerboard actor]()

-

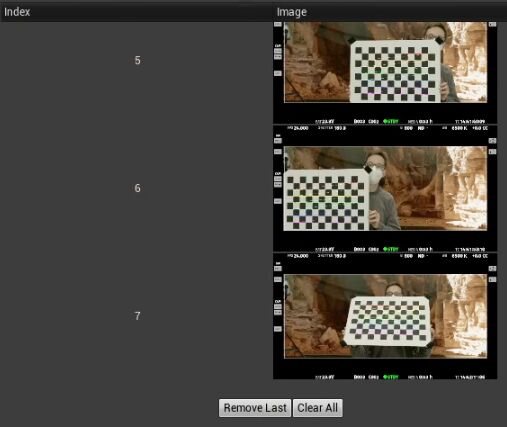

Click on the viewport to start the calibration process and create the first image to be used.

![Place a checkerboard in front of the camera]()

-

Move the checkerboard around the camera's field of view, clicking the viewport at each new position to capture another calibration image. Capture enough positions that the overlapping images cover the entire field of view.

![Take several pictures of the checkerboard at different locations and angles]()

-

After collecting enough images (a minimum of four is required), click Add To Lens Distortion Calibration, then click OK on the Lens Calibration Info dialog box.

![Use the Lens Distortion Checkerboard algorithm]()

![Click OK to confirm the Lens Calibration Info]()

-

You will now see the Distortion Parameters update at the bottom of the window.

![Distortion Parameters update in the window]()

Most lenses have different distortion values at different focus distances. To make the Lens File as accurate as possible, repeat the process above at several focus distances.
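The Distortion Parameters are the coefficients of a lens model. The sketch below assumes the widely used Brown-Conrady formulation with radial terms (k1, k2, k3) and tangential terms (p1, p2), which is the form OpenCV uses. Whether the plugin's spherical model matches it term for term is an assumption here, and the coefficient values are invented for illustration.

```python
def distort(x: float, y: float,
            k1: float, k2: float, k3: float,
            p1: float, p2: float) -> tuple:
    """Map an undistorted normalized image point to its distorted position."""
    r2 = x * x + y * y
    radial = 1.0 + k1 * r2 + k2 * r2 ** 2 + k3 * r2 ** 3
    x_d = x * radial + 2.0 * p1 * x * y + p2 * (r2 + 2.0 * x * x)
    y_d = y * radial + p1 * (r2 + 2.0 * y * y) + 2.0 * p2 * x * y
    return (x_d, y_d)


# Invented coefficients: a mild barrel distortion with no tangential terms.
K1, K2, K3, P1, P2 = -0.1, 0.0, 0.0, 0.0, 0.0

center = distort(0.0, 0.0, K1, K2, K3, P1, P2)
edge = distort(0.5, 0.0, K1, K2, K3, P1, P2)
print(f"center stays at {center}, edge point moves to {edge}")
```

In this model the image center is unaffected and the displacement grows toward the edges, which is why calibration images need to cover the whole field of view and not only the middle of the frame.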

Section Results

In this section, you set up your CG checkerboard to match your physical checkerboard, captured images of your physical checkerboard, and used them to add lens distortion calibration data to your Lens File and camera. Optionally, you created a distortion profile that changes over different focus distances.

10 - Confirming Lens Distortion

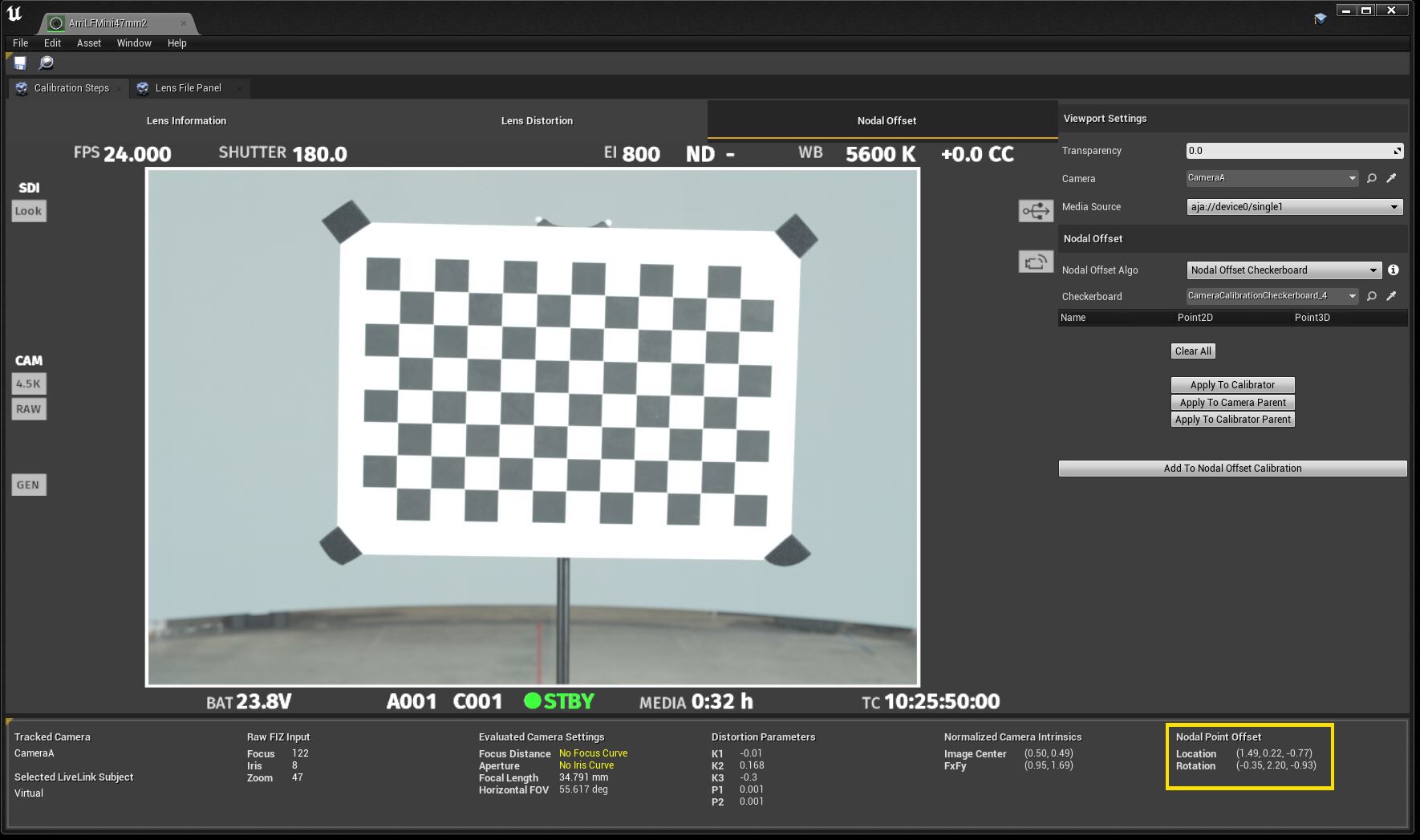

To check the lens distortion, you can use the Nodal Offset tab. In this case, the untracked CG checkerboard in your scene is moved to the camera and overlays the video feed. If the CG overlay matches the video feed, the calculation is visually confirmed.

-

Click the Nodal Offset tab and go to the Nodal Offset section.

-

Click the Nodal Offset Algo dropdown and select Nodal Offset Checkerboard .

-

Click the Checkerboard dropdown and select your Checkerboard Actor .

![Set your Nodal Offset Algo and Checkerboard]()

-

Click the image to populate all the corner data from the calibration. Click Apply To Calibrator . This will move the CG Checkerboard actor to the camera.

![Click Apply to Calibrator]()

-

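Go to the Viewport Settings section and raise the Transparency value from 0 toward 1 to verify the Checkerboard Actor matches the physical checkerboard in the camera viewport. Because a value of 1 shows only the CG elements, an intermediate value such as 0.5 makes it easier to compare the two.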

![Verify the Checkerboard Actor matches the physical checkerboard]()

Section Results

In this section, you verified the lens distortion calculation by matching the CG Checkerboard Actor to the physical checkerboard in the camera's viewport.
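The Transparency setting used for this check can be thought of as a blend between the video and the CG render. The sketch below assumes a simple linear blend per pixel, which is consistent with the behavior described for the values 0 and 1 but is not confirmed as the plugin's actual compositing method. The function name and pixel values are hypothetical.

```python
def blend_pixel(video_rgb, cg_rgb, transparency: float):
    """Linear blend: 0 shows only the video, 1 shows only the CG element."""
    if not 0.0 <= transparency <= 1.0:
        raise ValueError("transparency must be between 0 and 1")
    return tuple(
        (1.0 - transparency) * v + transparency * c
        for v, c in zip(video_rgb, cg_rgb)
    )


# Hypothetical pixel colors in the 0..1 range.
video_pixel = (0.2, 0.4, 0.6)
cg_pixel = (1.0, 0.0, 0.0)

video_only = blend_pixel(video_pixel, cg_pixel, 0.0)
cg_only = blend_pixel(video_pixel, cg_pixel, 1.0)
half_mix = blend_pixel(video_pixel, cg_pixel, 0.5)
print(video_only, cg_only, half_mix)
```

Under this assumption, a mid-range value keeps both the physical and the CG checkerboard visible at once, which is what makes misalignment between them easy to spot.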

11 - Creating a Tracked Object for Nodal Offset Calibration

The nodal offset accounts for the difference between the axis of the tracked camera and the axis of the actual nodal point of the lens/camera combination being calibrated. If the lens is changed or the tracking device on the camera is moved, a new nodal point offset will need to be calculated.

In order to calculate the nodal offset to be applied to the Cine Camera, you will need to create a physically tracked object. A CG model needs to be made of this tracked object. In this example, the physical checkerboard that was used previously had tracking markers added to it. A CG model was created to match the tracked checkerboard and was set up for optical tracking. The CG checkerboard is imported into UE for use in the next steps.

While using a checkerboard is common, you can create any tracked object and CG model to suit your needs. The process will include adding calibration points to your CG model. Your tracked object and CG model need to be set up correctly to match each other.
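As a simplified picture of what a nodal offset does, the sketch below takes the pose reported for the tracked point on the camera and adds a fixed offset, expressed in the tracker's local axes, to find the position of the lens nodal point. It handles translation with a yaw-only rotation; a full offset would typically also carry a rotation of its own. All names and numbers are hypothetical, and the axis convention is an assumption.

```python
import math


def apply_nodal_offset(tracker_position, tracker_yaw_deg, offset_local):
    """Return the world position of the nodal point.

    tracker_position: (x, y, z) of the tracked point in world space.
    tracker_yaw_deg:  rotation of the tracker about the vertical (z) axis.
    offset_local:     (x, y, z) offset to the nodal point in tracker-local axes.
    """
    yaw = math.radians(tracker_yaw_deg)
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)
    tx, ty, tz = tracker_position
    ox, oy, oz = offset_local
    # Rotate the local offset into world space, then add the tracker position.
    return (
        tx + cos_y * ox - sin_y * oy,
        ty + sin_y * ox + cos_y * oy,
        tz + oz,
    )


# Hypothetical values: the nodal point sits 10 units ahead of and 5 units
# below the tracked point.
TRACKER_POSITION = (100.0, 200.0, 150.0)
NODAL_OFFSET_LOCAL = (10.0, 0.0, -5.0)

facing_forward = apply_nodal_offset(TRACKER_POSITION, 0.0, NODAL_OFFSET_LOCAL)
turned_90 = apply_nodal_offset(TRACKER_POSITION, 90.0, NODAL_OFFSET_LOCAL)
print(facing_forward, turned_90)
```

Because the offset rotates with the tracker, the same local offset yields different world positions as the camera pans. This is also why the offset must be recalculated when the lens changes or the tracking device is moved on the camera body.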

-

Right-click in the Content Browser and select Blueprint Class under the Create Basic Asset section.

-

Click Actor on the Pick Parent Class window.

-

Name the Blueprint Checkerboard_TRK .

![Create a new Blueprint Class]()

![Select the Actor parent class]()

-

Double-click the Checkerboard_TRK Blueprint in the Content Browser to open it.

-

Go to the Components window and click + Add Component .

-

Search for then select Static Mesh .

![Add a Static Mesh component]()

-

Go to the Details panel and scroll down to the Static Mesh section. Click the Static Mesh dropdown and search for then select Checkerboard_mesh from the list.

![Add the Checkerboard_mesh Static Mesh]()

-

With the Static Mesh component selected, go to the Components window and click + Add Component .

-

Search for then select Calibration Point .

-

Name the component Top_Left .

![Add a Calibration Point component and name it Top_Left]()

-

Inside the Viewport , place the Top_Left component on the top left point in the checkerboard, as seen below.

![Place the Top_Left component on the top left location on the checkerboard]()

-

Duplicate the Top_Left component four times and name the new copies Bottom_Left, Bottom_Right, Top_Right, and Center. Place those components in their corresponding locations on the checkerboard mesh. Compile and Save the Blueprint.

![Place all four components on their corresponding locations]()

-

Drag the Checkerboard_TRK Blueprint to your level and go to the Details panel.

-

Click + Add Component and search for then select Live Link Controller .

-

Select the Live Link Controller component and scroll down to the Live Link section.

-

Click the Subject Representation dropdown and select Checkerboard .

![Add a Live Link Controller component]()

![Add the Checkerboard as the Subject Representation]()

Section Results

In this section you created the Checkerboard_TRK Blueprint Actor and added five Calibration Points: Top_Left, Bottom_Left, Bottom_Right, Top_Right, and Center.

12 - Calculating Nodal Offset

The nodal offset applies an offset to the CG Cine camera to account for the actual nodal point of the lens being used on the physical camera.

The Camera Calibration plugin calculates the nodal offset by having the tracked object move to different positions in front of the physical production camera. In this example, the Checkerboard Actor has calibration points which are collected and used to calculate the nodal offset.
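One common way to judge how well a set of 3D calibration points agrees with the pixels clicked in the image is the reprojection error: project each 3D point through a camera model and measure how far it lands from the clicked pixel. The sketch below shows that idea with an ideal pinhole camera. It is not the plugin's implementation. The focal length in pixels, the principal point, the sample points, and the camera axis convention (x right, y down, z forward, which differs from UE's) are all assumptions for illustration.

```python
import math


def project(point_cam, focal_px: float, principal_point):
    """Project a camera-space point (x right, y down, z forward) to pixels."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    cx, cy = principal_point
    return (focal_px * x / z + cx, focal_px * y / z + cy)


def rms_reprojection_error(points_cam, clicked_px, focal_px, principal_point):
    """Root-mean-square pixel distance between projected and clicked points."""
    if not points_cam or len(points_cam) != len(clicked_px):
        raise ValueError("need the same non-zero number of points and clicks")
    squared_sum = 0.0
    for point, (u, v) in zip(points_cam, clicked_px):
        pu, pv = project(point, focal_px, principal_point)
        squared_sum += (pu - u) ** 2 + (pv - v) ** 2
    return math.sqrt(squared_sum / len(points_cam))


# Hypothetical camera and calibration points.
FOCAL_PX = 1000.0
PRINCIPAL_POINT = (960.0, 540.0)
points = [(0.0, 0.0, 100.0), (10.0, 0.0, 100.0)]

perfect_clicks = [project(p, FOCAL_PX, PRINCIPAL_POINT) for p in points]
imperfect_clicks = [(963.0, 544.0), (1060.0, 540.0)]

error_perfect = rms_reprojection_error(points, perfect_clicks, FOCAL_PX, PRINCIPAL_POINT)
error_imperfect = rms_reprojection_error(points, imperfect_clicks, FOCAL_PX, PRINCIPAL_POINT)
print(error_perfect, error_imperfect)
```

Collecting points at several positions, as the steps below describe, gives a solve like this more varied constraints, so a single imprecise click has less influence on the result.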

-

Double-click the Lens File in the Content Browser to open it.

-

Click the Nodal Offset tab and go to the Nodal Offset section.

-

Click the Nodal Offset Algo dropdown and select Nodal Offset Points Method .

-

Click the Calibrator dropdown and select the Checkerboard_TRK Actor.

![Select the Nodal Offset Points Method and the Checkerboard]()

-

Click on the checkerboard image in the viewport to match the location of each Calibration Point. For example, click the bottom right corner of the checkerboard to match the Bottom_Right Calibration Point .

![Click the Bottom Right corner on the checkerboard]()

The Calibration Points will update automatically as you click on the image.

-

Move the tracked checkerboard to 5 to 8 different positions in front of the camera and acquire a set of calibration points at each position.

-

When you are finished, click Add To Nodal Offset Calibration .

-

Confirm that the nodal offset was applied by reviewing the nodal offset properties shown in the bottom right corner of the window.

![Check the properties on the bottom right corner of the window]()

-

Change the transparency from 0 to 0.5. The CG element of the tracked checkerboard should overlay exactly the checkerboard in the live video.

![Change the transparency to see how the CG element overlays with the tracked checkerboard]()

-

Pan the tracked camera and move it around the tracked checkerboard. The CG version should stay correctly superimposed over the physical checkerboard in the live video feed.

-

If you did not set up timecode and genlock and adjust the synchronization using the Timed Data Monitor, there may be lag in the CG element as you move. When you stop moving the camera, the CG element will line up again.

Section Results

In this section you collected calibration point values by clicking the checkerboard image to match each calibration point. After enough calibration points were collected by moving the tracked checkerboard around, you applied the nodal offset to the camera parent. Lastly, you verified that the nodal offset was correct by checking that the CG version of the checkerboard was overlaid on the real version in the video source correctly.

If you are calibrating an LED wall, follow the steps in the Aligning the LED Wall to Camera Tracking using Arucos guide.